How knowledge is born: putting data in context

Data is transforming biology and medicine. In many biomedical domains, each year now produces more data than all previous years combined, a consequence of exponential growth.

Yet data is not knowledge. And growing knowledge has proven far more challenging than simply accumulating data.

Finding new drug targets, biomarkers, and personalized treatment strategies will increasingly depend on our ability to translate data into knowledge. Can we do this? Can we do it more effectively?

Why doesn’t knowledge grow at anything like the exponential rate at which data accumulates?

Context

We think the answer lies in context, or rather the lack of it. Here is why.

Imagine you are analyzing data at a pharmaceutical company or a research lab. Your new data suggest that gene X is aberrantly activated in disease Y, and you think that X might be a possible target for Y. You want to quickly find out whether the gene has been linked to disease Y in the literature. Is X genetically linked to Y? Is there other data in cell lines, model organisms or humans that can support your finding? What are the downstream pathways of X, what regulates it, and what are its interactors? What drugs target gene X, what might be the molecular and phenotypic consequences of inhibiting X, and so on…

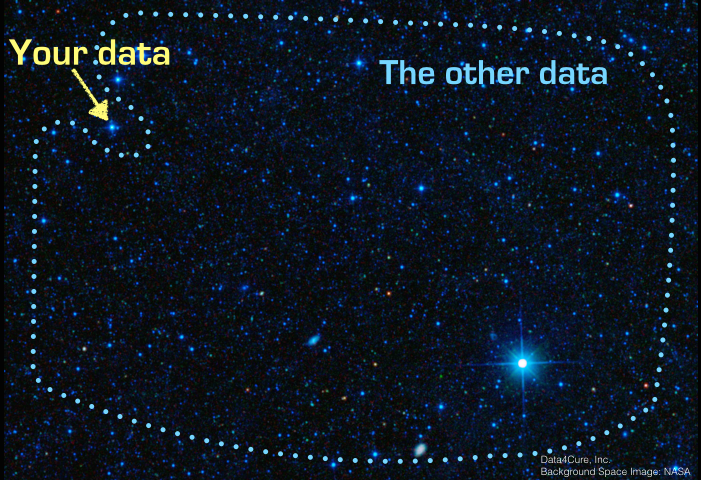

Now imagine your data suggest 1000 aberrantly activated genes. You will likely find that your initial analysis (i.e. identifying the 1000 aberrantly activated genes) takes only a fraction of the time needed to make sense of the data in the context of all other data and results. And you are never really done. In a rapidly advancing field, prior knowledge is always changing and context needs constant updates.

Data just doesn’t come with this kind of rich context included. We need to make the right connections to prior knowledge, other datasets and other results. By doing so, we develop an understanding of how our results fit in, what novel insights they provide, and how they change the current state of knowledge. That’s how most new knowledge is born.

Building Biomedical Intelligence

What if we could automate this process? What if data could automatically connect to literature, other relevant datasets and prior knowledge?

At Data4Cure, we have developed an advanced dynamic ontology platform that puts data into context, making it possible to find connections and rapidly grow knowledge from large biomedical datasets.

Our platform is based on a dynamic biomedical ontology – the CURIE Knowledge Graph – and a growing set of applications that continuously extract, annotate and integrate contextual information from various sources. These applications

1. gather, import and process vast amounts of molecular and clinical data, including genome-wide DNA, RNA, epigenetic, and proteomics profiles,

2. ingest reference databases, including databases of clinically-associated variants, genotype-phenotype, drug-disease, and drug-target associations,

3. crawl and parse millions of scientific papers and clinical trials to mine meaningful relationships and information from free text,

4. aggregate molecular and clinical information with genome-wide molecular networks and pathways to identify network/pathway-level activities and aberrations.
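Item 4 refers to identifying pathway-level activities from gene-level results. The post does not describe how the platform does this. As one illustration of the general idea only, a standard over-representation test (a one-sided hypergeometric test) asks whether a list of hit genes overlaps a pathway more than chance would predict. The sketch below is that textbook technique, not Data4Cure's method; the function names, gene identifiers and toy pathways are hypothetical.

```python
from math import comb


def hypergeom_upper_tail(N: int, K: int, n: int, k: int) -> float:
    """P(X >= k) for X ~ Hypergeometric(population N, K successes, n draws).

    Here: N genes measured, K of them in the pathway, n hit genes,
    k hits that fall inside the pathway.
    """
    if not (0 <= K <= N and 0 <= n <= N):
        raise ValueError("require 0 <= K <= N and 0 <= n <= N")
    total = comb(N, n)
    upper = min(n, K)
    # Exact integer sum of the probability mass for overlaps k..upper
    tail = sum(comb(K, i) * comb(N - K, n - i) for i in range(max(k, 0), upper + 1))
    return tail / total


def pathway_enrichment(hits, pathways, universe):
    """Return {pathway_name: (overlap, p_value)} for a set of hit genes.

    Hypothetical helper: `pathways` maps a pathway name to its member genes,
    and `universe` is the set of all genes that were measured.
    """
    universe = set(universe)
    hit_set = set(hits) & universe
    results = {}
    for name, members in pathways.items():
        member_set = set(members) & universe
        overlap = len(hit_set & member_set)
        p = hypergeom_upper_tail(len(universe), len(member_set), len(hit_set), overlap)
        results[name] = (overlap, p)
    return results


if __name__ == "__main__":
    genes = {f"G{i}" for i in range(10)}  # toy universe of 10 genes
    toy_pathways = {"P1": [f"G{i}" for i in range(5)], "P2": ["G9"]}
    print(pathway_enrichment([f"G{i}" for i in range(5)], toy_pathways, genes))
```

When many pathways are tested at once, the resulting p-values would normally also be corrected for multiple testing (for example with the Benjamini-Hochberg procedure).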

The resulting contextual data and results flow into the CURIE Knowledge Graph, which provides a search engine for end users and an API used by all other applications on the platform.
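The actual schema and API of the CURIE Knowledge Graph are not described in this post. To make the idea of "putting data into context" concrete, here is a purely hypothetical toy: typed, evidence-tagged edges stored per gene, and a lookup that annotates a list of hit genes (like the 1000 genes in the example above) with everything connected to them. The class, method names, relation labels and entities (`GENE_X`, `DISEASE_Y`, `DRUG_A`) are all illustrative assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Edge:
    source: str    # e.g. a gene identifier
    relation: str  # hypothetical relation label, e.g. "associated_with"
    target: str    # e.g. a disease, drug or interacting gene
    evidence: str  # where the link came from, e.g. "literature", "database"


class ToyKnowledgeGraph:
    """Hypothetical in-memory graph; not the CURIE Knowledge Graph API."""

    def __init__(self):
        self._out = defaultdict(list)  # source node -> list of outgoing edges

    def add(self, source, relation, target, evidence):
        self._out[source].append(Edge(source, relation, target, evidence))

    def neighbors(self, node, relation=None):
        """Outgoing edges of `node`, optionally filtered by relation type."""
        # .get avoids creating empty entries for unknown nodes
        return [e for e in self._out.get(node, []) if relation is None or e.relation == relation]

    def context(self, genes):
        """For each gene, group its linked targets and evidence by relation type."""
        report = {}
        for gene in genes:
            by_relation = defaultdict(list)
            for edge in self._out.get(gene, []):
                by_relation[edge.relation].append((edge.target, edge.evidence))
            report[gene] = dict(by_relation)
        return report


if __name__ == "__main__":
    kg = ToyKnowledgeGraph()
    kg.add("GENE_X", "associated_with", "DISEASE_Y", "literature")
    kg.add("GENE_X", "targeted_by", "DRUG_A", "database")
    kg.add("GENE_X", "interacts_with", "GENE_Z", "network")
    print(kg.context(["GENE_X", "GENE_W"]))
```

A gene with no recorded links, such as `GENE_W` here, comes back with an empty context, which is itself useful: it flags a result that is not yet connected to prior knowledge.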

Across a wide range of disease areas and applications, Biomedical Intelligence applications use biology-informed statistical and algorithmic priors to effectively aggregate signal and filter out biological and technical noise. Together with the CURIE Knowledge Graph, they comprise the Data4Cure Biomedical Intelligence Cloud.

In subsequent posts we will dive deeper into individual components of the Biomedical Intelligence Cloud and discuss new technologies that enable them.

The first post in this series is about our Multiscale Maps. It is already available here.

Interested in learning more? Contact us for a free demo or drop us a line at info@data4cure.com.